
Meta gets its act together to prevent the misuse of generative AI

The company is working on new rules and measures to label content created with AI.

- February 7, 2024

- Updated: July 2, 2025 at 12:05 AM

As generative AI becomes more widespread, Meta is working to establish new standards for disclosing AI-generated content in its apps. The new policies will place more responsibility on users to declare when their content was made with AI, and will introduce new systems for detecting AI-generated content through technical means.

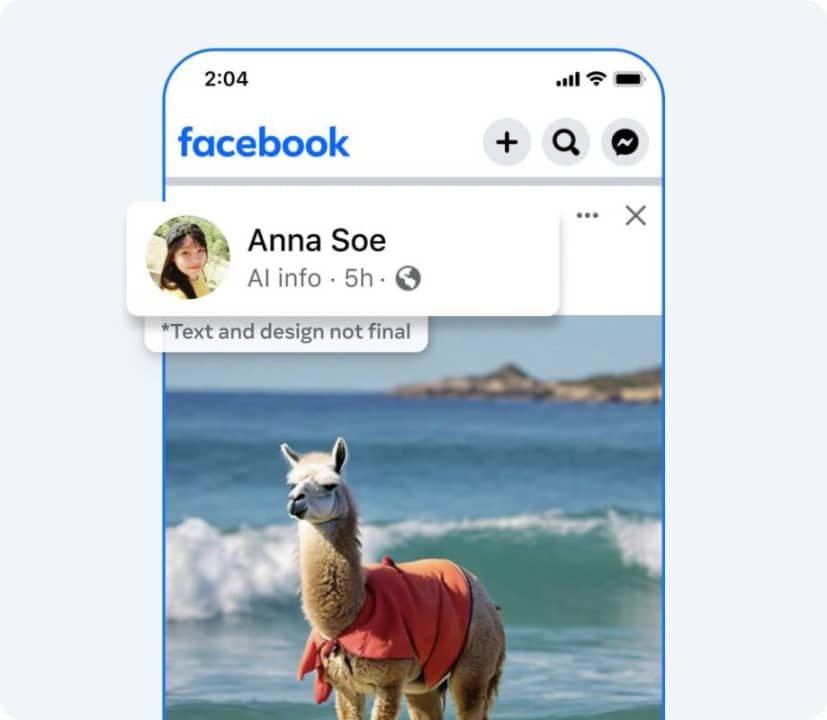

“We are creating industry-leading tools capable of identifying invisible markers at scale – specifically, the ‘AI-generated’ information from the C2PA and IPTC technical standards – to be able to label images from Google, OpenAI, Microsoft, Adobe, Midjourney, and Shutterstock as they implement their plans to add metadata to the images created by their tools,” says Nick Clegg, Meta’s President of Global Affairs, in a blog post.

In theory, these technical detection measures will allow Meta and other platforms to label AI-generated content wherever it appears, so that all users are better informed about what was created by artificial intelligence.

Among other things, the new measures should help reduce the spread of AI-generated misinformation (text, images, video, or audio), which could prevent, or at least make more difficult, incidents like the one singer Taylor Swift experienced.

Pedro Domínguez: publicist and audiovisual producer in love with social networks. I spend more time thinking about which video games I will play than playing them.
