Instagram has announced new measures to help protect its teenage users (those aged 13 and older but under 18). These include tighter restrictions on content related to self-harm, new prompts encouraging more private settings, and other additional safeguards.

First, Instagram says it is taking further steps to limit teens' exposure to content related to self-injury, given the negative impact such content can have on younger users.

“Let’s take the example of someone who posts about their constant struggle with self-harm thoughts. It is an important story and can help destigmatize these issues, but it is a complex topic and may not be suitable for all young people,” Instagram states. “Now, we will start removing this type of content from teenagers’ experiences on Instagram and Facebook, as well as other types of content inappropriate for their age.”

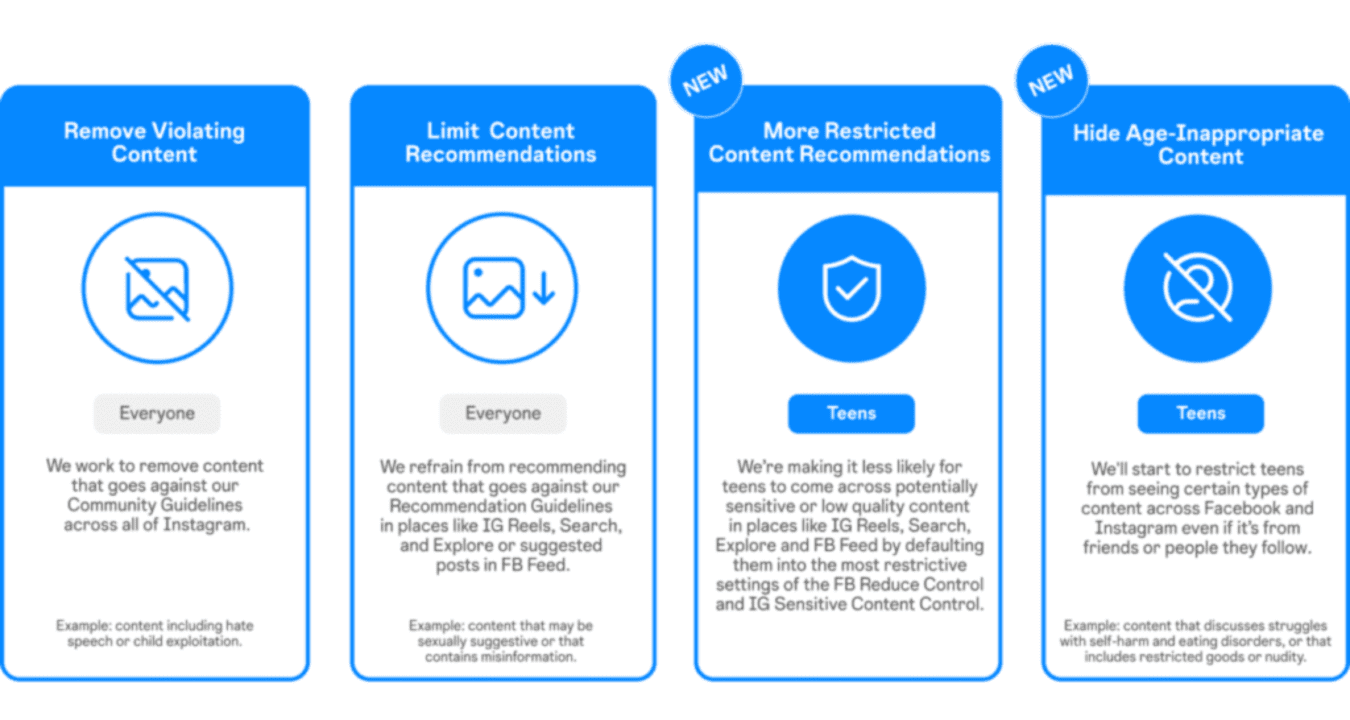

Instagram says it already limits the self-harm-related content it recommends to teen users in Reels and Explore. It will now apply the same restrictions in Feed and Stories, even when the content has been posted by an account the teen follows. According to the company, this will give teens more protection while still allowing Meta to point them to relevant support resources where possible.

In addition, Meta will now place all teen users on both Facebook and Instagram in its most restrictive content settings. New teen users are already placed in these settings when they sign up, and Meta is now extending them to all teens already active on its platforms.

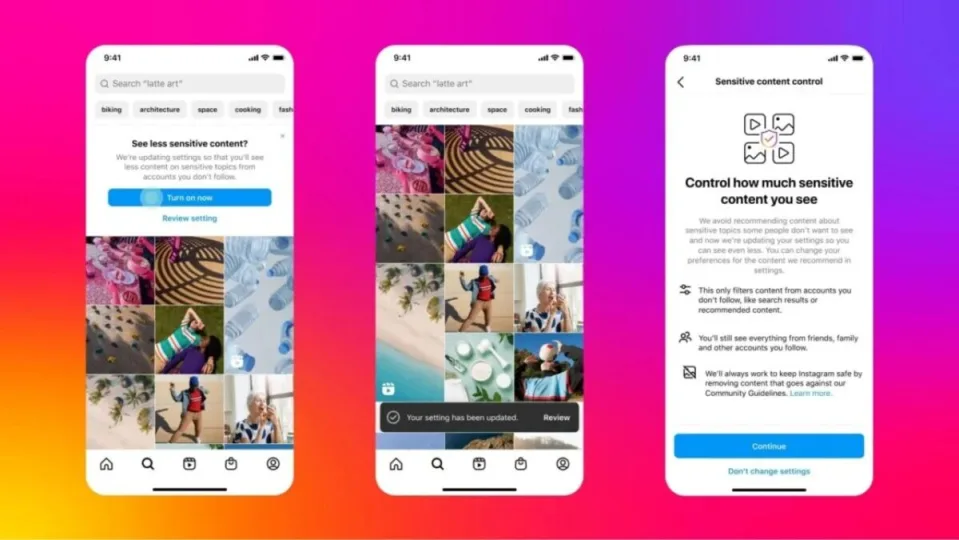

Finally, Instagram is sending new notifications to all teen users, encouraging them to update their settings for a more private experience.

The new measures were developed on the advice of experts in child and adolescent health, who have raised concerns about how much of this content young people are exposed to on the app and its impact on vulnerable users.