News

The shocking truth: AIs are vulnerable to cyberattacks, but these defenses could save them!

Malefactors are on the prowl and AI can compromise our security if appropriate measures are not taken.

- February 25, 2023

- Updated: July 2, 2025 at 2:56 AM

AI is here to stay. There are chatbots you can hold a conversation with, such as the popular ChatGPT, its recent integration into Bing, and Bard, Google’s new AI. There are image generators such as DALL-E 2, Midjourney or Dream. And there are tools that help in other areas of our lives, such as cooking. The possibilities are already many and progress is increasingly promising, although it also involves risks.

One of the most recurrent fears in the AI world is that AIs can be “hacked”, just like any other program. ZDNET has looked into the issue and interviewed several professionals about what AI could bring in the future, the risks it carries, and how to prevent potential problems.

Among them is Bruce Draper, a program manager at the Defense Advanced Research Projects Agency (DARPA), the U.S. Department of Defense’s research and development agency. He is one of the people best placed to understand the threats we could face if attackers gained access to the AIs around us.

“The benefits are real, but we have to do it with our eyes open: there are risks and we have to defend our AI systems,” says Draper. “As artificial intelligence becomes more pervasive, it’s being used in all kinds of industries and environments, and they all become potential attack surfaces. So we want to give everyone the opportunity to defend themselves.”

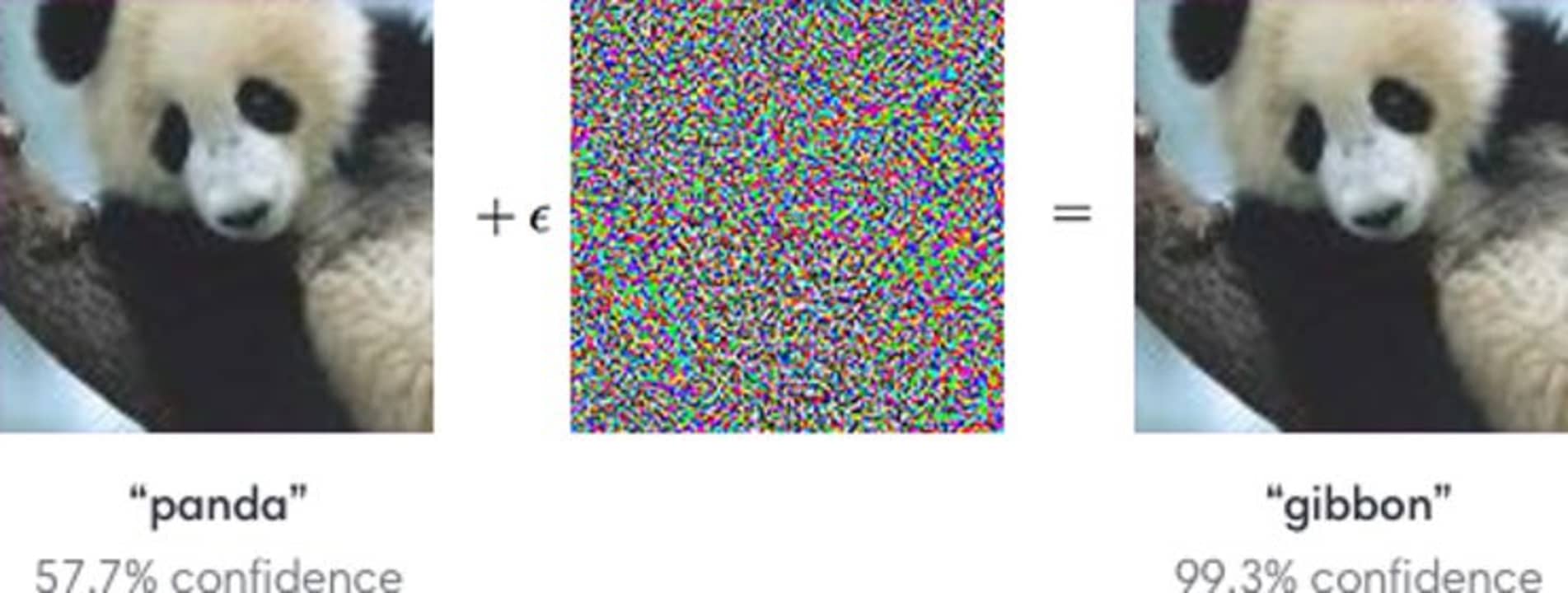

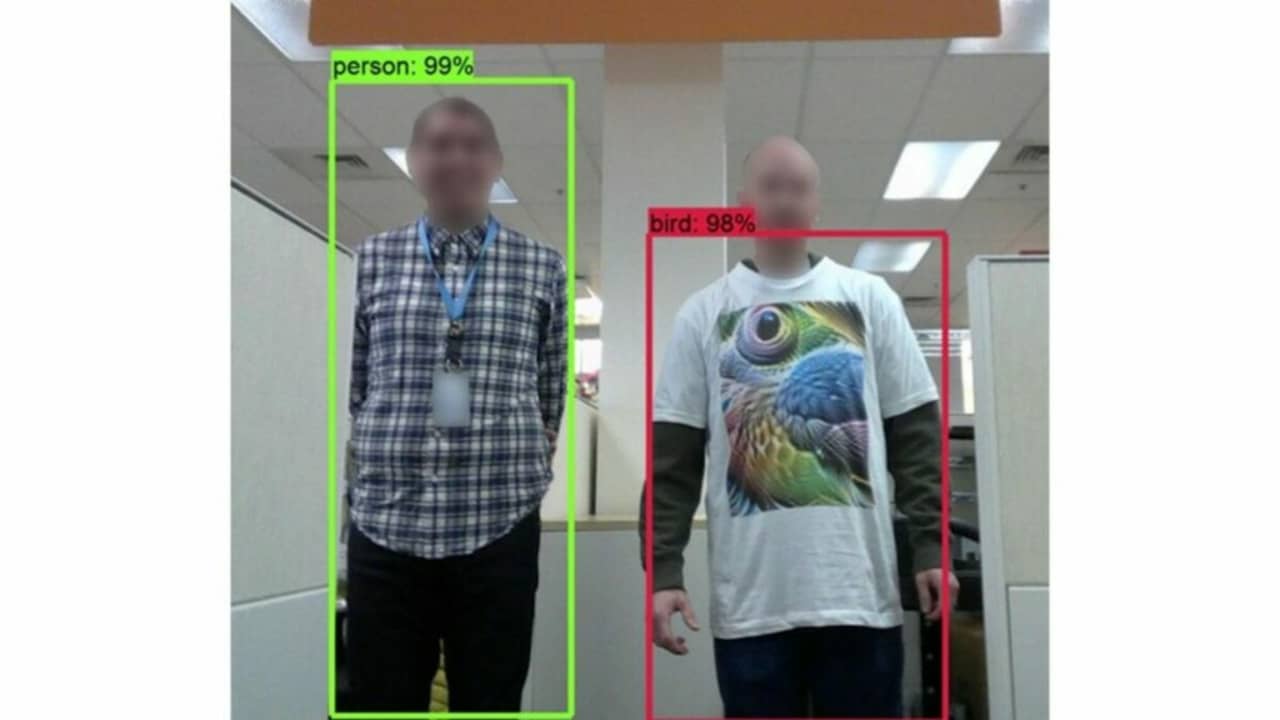

Using a tactic known as an “adversarial attack”, someone who knows what they are doing could trick an AI by making a small change to its input. That change is amplified as it passes through the model’s internal computations and ends up producing a large change in the output. In this way, an AI could be made to believe that an image of a cat is really a dog, and the same kind of manipulation becomes far more dangerous in sensitive areas such as security.
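To make the idea concrete, here is a minimal Python sketch of one well-known adversarial technique, the fast gradient sign method (FGSM), applied to a toy logistic classifier. Everything in it is an assumption made for illustration: the 1,000 “pixel” features, the weights `w` and `b`, the cat/dog labels and the `epsilon` budget are hypothetical and do not come from any real system.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def predict_proba(w, b, x):
    """Probability that x is class 1 ("dog") under a logistic model."""
    return sigmoid(np.dot(w, x) + b)

def fgsm_perturb(w, b, x, y_true, epsilon):
    """Fast gradient sign method for a logistic model.

    Moves every feature by exactly epsilon in the direction that
    increases the cross-entropy loss for the true label.
    """
    p = predict_proba(w, b, x)
    grad_x = (p - y_true) * w  # d(loss)/dx for a sigmoid + cross-entropy model
    return x + epsilon * np.sign(grad_x)

# Hypothetical toy model: 1,000 "pixels", fixed weights (illustrative only).
w = np.linspace(-1.0, 1.0, 1000)
b = -0.5 * w.sum()

# Hypothetical "cat" image (class 0): mid-grey pixels nudged slightly
# towards the cat side of the decision boundary.
x = 0.5 - 0.01 * np.sign(w)

epsilon = 0.02  # each pixel may change by at most 2% of the [0, 1] range
x_adv = fgsm_perturb(w, b, x, y_true=0, epsilon=epsilon)

clean_p = predict_proba(w, b, x)
adv_p = predict_proba(w, b, x_adv)
print(f"P(dog) on the clean input:     {clean_p:.3f}")
print(f"P(dog) on the perturbed input: {adv_p:.3f}")
print(f"Largest per-pixel change:      {np.max(np.abs(x_adv - x)):.3f}")
```

No single pixel moves by more than `epsilon`, yet the tiny shifts all push in the same direction and add up across the 1,000 features, which is why the prediction flips from “cat” to “dog”.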

In view of this, programs such as DARPA’s GARD (Guaranteeing AI Robustness Against Deception) aim to develop tools that can protect AIs from attacks, as well as evaluate their defense against any attempt at manipulation or hacking.

GARD uses different types of resources to assess the robustness of AI models and their defenses against current and future adversarial attacks. Key components of the project include Armory, a virtual platform on GitHub that serves as a testbed, and the Adversarial Robustness Toolbox (ART), a set of tools also on GitHub that developers can use to defend their AIs against such threats.
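As a rough illustration of what assessing robustness can mean in practice, the Python sketch below measures how a classifier’s accuracy falls as an attacker is allowed larger and larger input changes. It does not use Armory or ART and does not reproduce their APIs. The synthetic data, the linear model and the helper names `worst_case_inputs` and `robustness_curve` are hypothetical stand-ins chosen for this example.

```python
import numpy as np

def predict(w, b, X):
    """Linear classifier: class 1 if the score is positive, else class 0."""
    return (X @ w + b > 0).astype(int)

def worst_case_inputs(w, X, y, epsilon):
    """Shift each feature by epsilon towards the wrong class.

    For a linear model this is the strongest attack that changes no
    feature by more than epsilon.
    """
    direction = np.where(y[:, None] == 1, -1.0, 1.0) * np.sign(w)[None, :]
    return X + epsilon * direction

def robustness_curve(w, b, X, y, epsilons):
    """Accuracy under attack for each perturbation budget in epsilons."""
    return {
        eps: float(np.mean(predict(w, b, worst_case_inputs(w, X, y, eps)) == y))
        for eps in epsilons
    }

# Hypothetical synthetic test set: 200 samples, 20 features, two classes.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=200)
X = rng.normal(size=(200, 20)) + 0.5 * (2 * y - 1)[:, None]

# Hypothetical model under evaluation (weights fixed for illustration).
w = np.ones(20)
b = 0.0

epsilons = [0.0, 0.1, 0.25, 0.5, 1.0]
curve = robustness_curve(w, b, X, y, epsilons)
for eps in epsilons:
    print(f"epsilon = {eps:<4}  accuracy under attack = {curve[eps]:.2f}")
```

The entry for `epsilon = 0.0` is the ordinary accuracy on clean data. How quickly the figure drops as `epsilon` grows gives a simple picture of how robust the model is, and a defense can be judged by how much it flattens that curve.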

Advancing this type of defense and prevention technology is vitally important. AIs are already present in our day-to-day lives, and will become even more so in our homes, our cars and even the establishments we visit. In such a world, an attack could paralyze an entire city or put our most intimate data, including banking data, in the hands of criminals.

Pedro Domínguez

Publicist and audiovisual producer in love with social networks. I spend more time thinking about which videogames I will play than playing them.
