
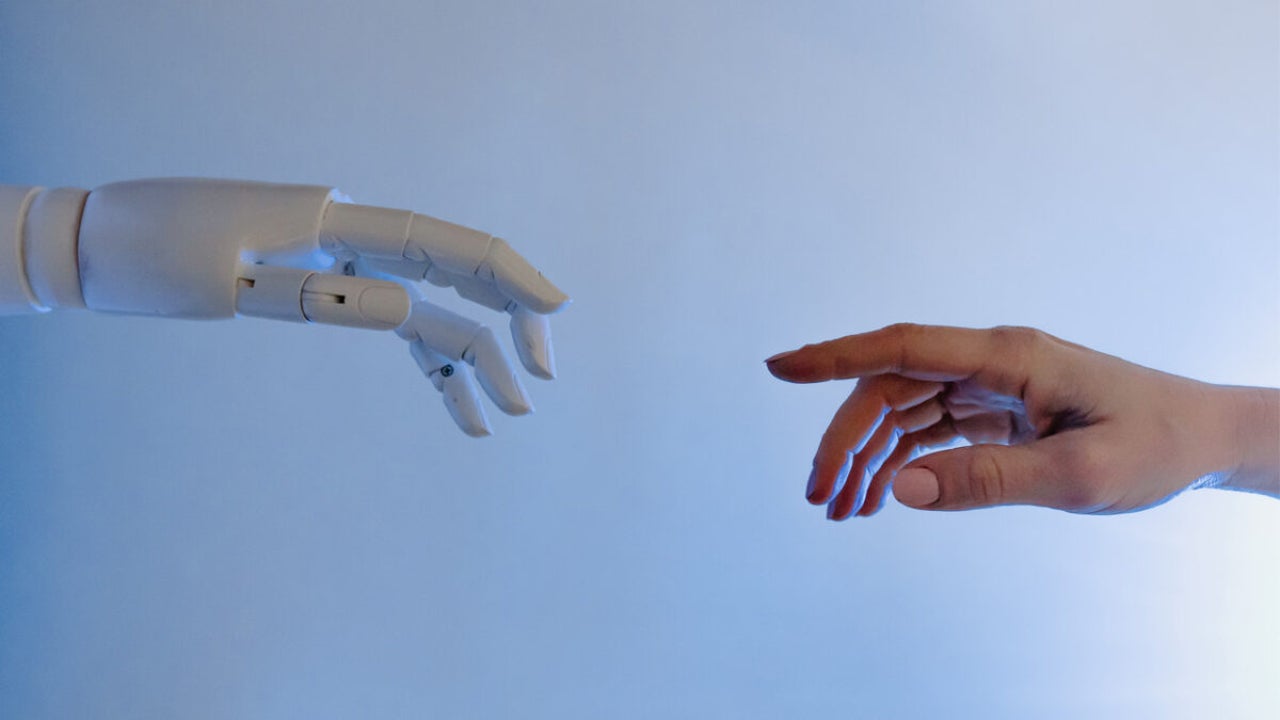

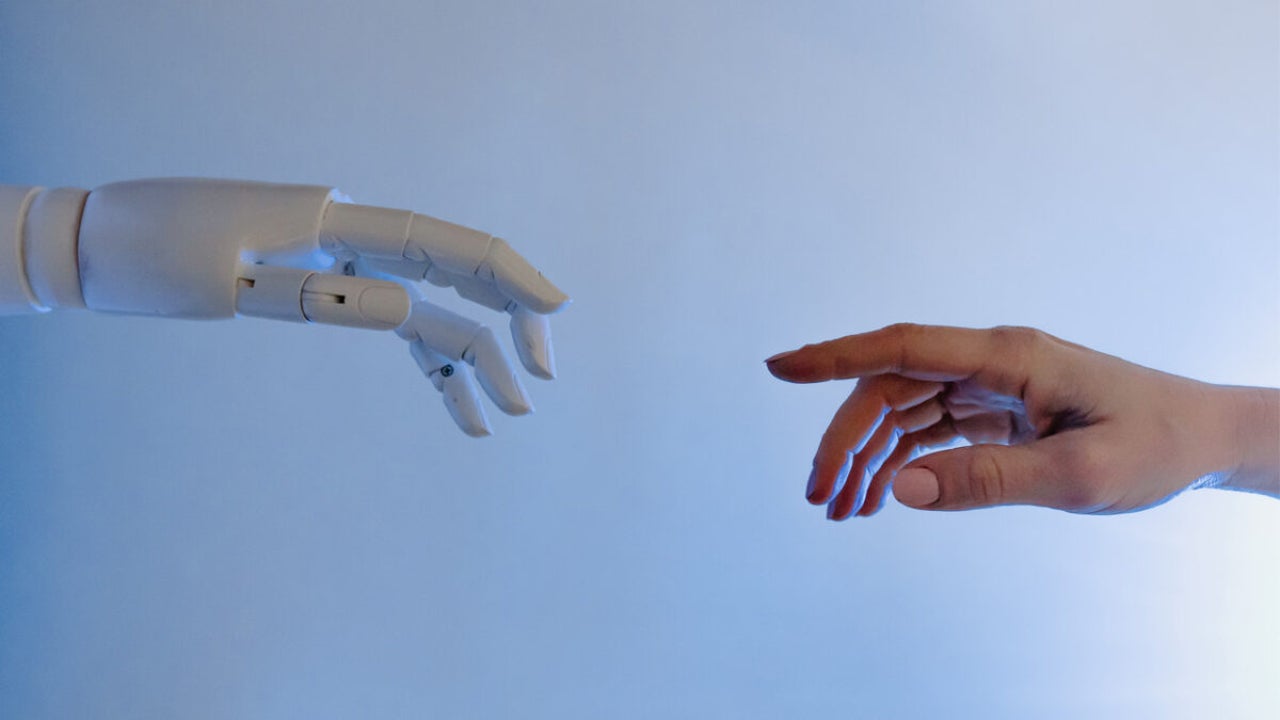

Is ChatGPT the world’s first (truly) useful chatbot?

- December 4, 2022

- Updated: July 2, 2025 at 3:16 AM

OpenAI has released a prototype chatbot with an eye-catching new capability, though it also demonstrates many of the stumbling blocks this technology still has to overcome. The company adapted GPT-3.5 to create ChatGPT and trained it to provide answers in a conversational format. The underlying model simply tries to predict what text will follow a given string of words, while ChatGPT tries to interact with users in a more human way.

ChatGPT does an outstanding job, clearly showing how far chatbots have come in recent years, but it still stumbles at times.

Human trainers helped shape the bot. They rated and ranked the answers it gave, and these rankings were then fed back into the bot so that its responses slowly started to match the trainers’ preferences. This technique is called reinforcement learning from human feedback, a variant of reinforcement learning, which is a standard way of training AI systems.

The difficulty of ensuring that a chatbot can rein in its offensive tendencies has long restricted the use of this technology. OpenAI has given ChatGPT the ability to refuse potentially harmful requests, such as explaining how to make a bomb or speaking in a racist or hateful manner. OpenAI sees this as a significant step in providing a safe AI model for everyone, hoping it will not take the same route as Microsoft’s Tay AI chatbot.

Users have already found ways to circumvent these safety features, for example by asking for this type of information in the form of a poem or asking the bot to create an imaginary scenario for a film. It’s human nature to find a way around a rule, but there are also many examples of ChatGPT providing exceptional answers to queries. Many include fun twists, such as asking ChatGPT to speak as a 1940s gangster or a pirate.

Not responding to harmful questions has improved the way the chatbot works, but it has not stopped the bot from making things up and lying through its teeth! ChatGPT is very good at making up entirely fictitious information and sounding exceedingly authoritative while doing so.

Michael Nielsen tweeted about how ChatGPT invented an entire book, supposedly written by the philosopher Harold Coward, that does not exist. This is a crucial problem with bots today. How can you trust their output if they can lie so convincingly?

Latest from Shaun M Jooste
