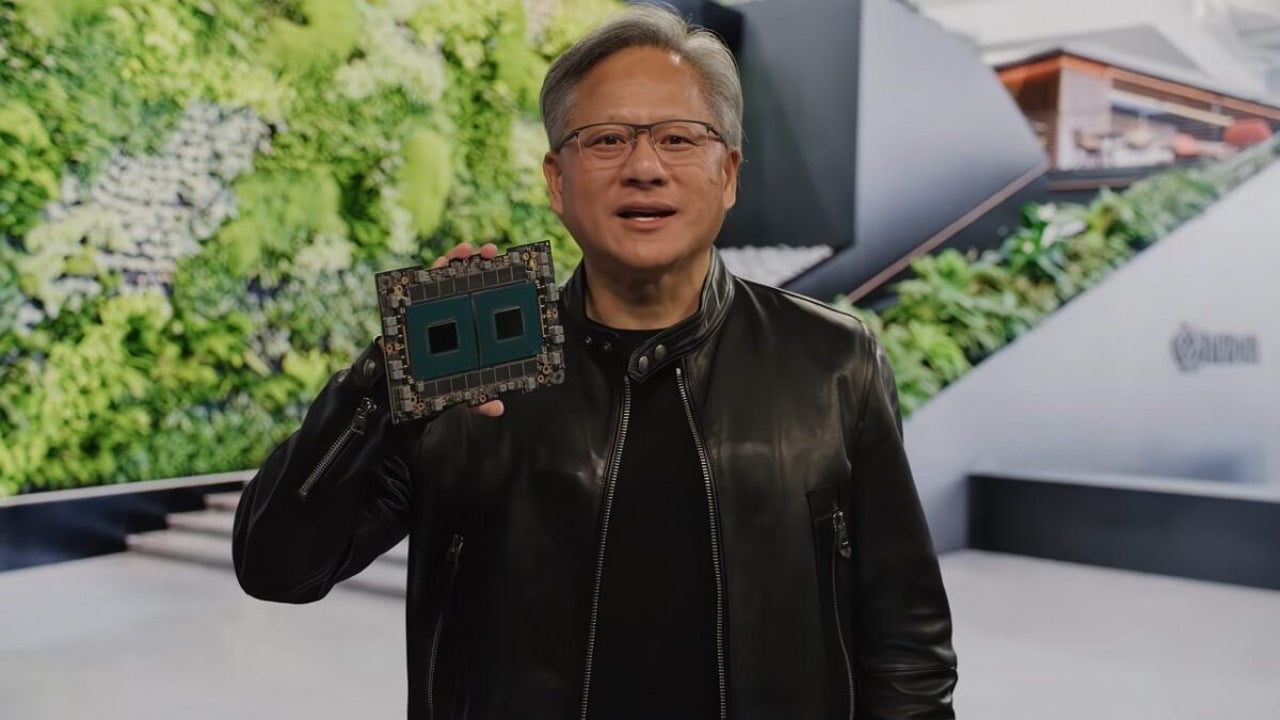

These are the first servers to feature NVIDIA’s new GB200 AI chips

Microsoft has stated that its Azure platform will be the first in the world to run NVIDIA's new Blackwell GB200 AI servers

- October 10, 2024

- Updated: January 5, 2025 at 6:42 AM

Microsoft today showcased its new NVIDIA Blackwell GB200 server for the Azure AI cloud computing platform. If that sounds like jargon, here is what it means.

Microsoft’s Azure team has announced that Azure is the first cloud platform to run AI servers powered by GB200, which it will use to scale advanced AI models.

Microsoft Azure offers its customers services such as virtual machines and AI processing for running their applications. This lets users scale and update those applications without owning the hardware. By adopting NVIDIA’s latest Blackwell B200 GPUs, Azure aims to offer its users more performance than its previous hardware could.

The world’s most powerful servers in terms of AI

The GB200-powered AI servers use B200 GPUs, NVIDIA’s flagship data center GPU, each offering 192 GB of HBM3e memory. GB200 is the name of the Grace Blackwell superchip, which pairs B200 GPUs with a Grace CPU.

The B200 is a high-performance chip designed for heavy workloads such as deep learning, training large AI models, and processing large datasets, and it is more efficient than its predecessors.

With B200 GPUs, AI models can be trained faster on Azure, which positions it as a performance leader among cloud computing platforms. The image Microsoft shared in its tweet shows a server rack with several B200 GPUs. It is not known how many B200 GPUs this server uses or how many the company has already deployed.

The server is liquid-cooled to keep temperatures down. This appears to be an initial test for Microsoft to work out how to implement liquid cooling in commercial servers.

Note that the server shown is not the GB200 NVL72, NVIDIA’s rack-scale system that combines 36 Grace CPUs and 72 B200 GPUs. That rack can deliver up to 3,240 TFLOPS of FP64 Tensor Core performance, and Foxconn will use it to build Taiwan’s fastest supercomputer.
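As a rough back-of-the-envelope check on the NVL72 figures, the short Python sketch below spreads the rack-level numbers quoted in this article across its 72 GPUs. It assumes the rack total divides evenly across GPUs and that every B200 carries the 192 GB of HBM3e quoted earlier. The variable names are purely illustrative, and the results are derived arithmetic, not official per-GPU specifications.

```python
# Figures quoted in the article for the GB200 NVL72 rack.
GRACE_CPUS = 36
B200_GPUS = 72
RACK_FP64_TENSOR_TFLOPS = 3240  # "up to" figure for the whole rack
HBM3E_PER_GPU_GB = 192          # per-B200 memory quoted in the article

# Assumption: the rack total is an even sum over all GPUs.
gpus_per_cpu = B200_GPUS // GRACE_CPUS
fp64_per_gpu_tflops = RACK_FP64_TENSOR_TFLOPS / B200_GPUS
total_hbm3e_gb = B200_GPUS * HBM3E_PER_GPU_GB

print(f"B200 GPUs per Grace CPU: {gpus_per_cpu}")
print(f"FP64 Tensor Core TFLOPS per GPU: {fp64_per_gpu_tflops:.0f}")
print(f"Total HBM3e in the rack: {total_hbm3e_gb} GB")
```

Under those assumptions, each Grace CPU is paired with two B200 GPUs, each GPU contributes about 45 TFLOPS of FP64 Tensor Core throughput, and the rack holds 13,824 GB of HBM3e in total.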

Journalist specialized in technology, entertainment and video games. Writing about what I'm passionate about (gadgets, games and movies) allows me to stay sane and wake up with a smile on my face when the alarm clock goes off. PS: this is not true 100% of the time.

Latest from Chema Carvajal Sarabia
